The pre-training task requires the model (i.e., the discriminator) to then determine which tokens from the original input have been replaced or kept the same.

While this makes a bit of sense, it doesn’t fit as well with the entire context. For example, in the below figure, the word “cooked” could be replaced with “ate”. Instead of corrupting the input by replacing tokens with “” as in BERT, our approach corrupts the input by replacing some input tokens with incorrect, but somewhat plausible, fakes. Inspired by generative adversarial networks (GANs), ELECTRA trains the model to distinguish between “real” and “fake” input data. ELECTRA is being released as an open-source model on top of TensorFlow and includes a number of ready-to-use pre-trained language representation models.ĮLECTRA uses a new pre-training task, called replaced token detection (RTD), that trains a bidirectional model (like a MLM) while learning from all input positions (like a LM). ELECTRA’s excellent efficiency means it works well even at small scale - it can be trained in a few days on a single GPU to better accuracy than GPT, a model that uses over 30x more compute. For example, ELECTRA matches the performance of RoBERTa and XLNet on the GLUE natural language understanding benchmark when using less than ¼ of their compute and achieves state-of-the-art results on the SQuAD question answering benchmark. ELECTRA - Efficiently Learning an Encoder that Classifies Token Replacements Accurately - is a novel pre-training method that outperforms existing techniques given the same compute budget. In “ ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators”, we take a different approach to language pre-training that provides the benefits of BERT but learns far more efficiently.

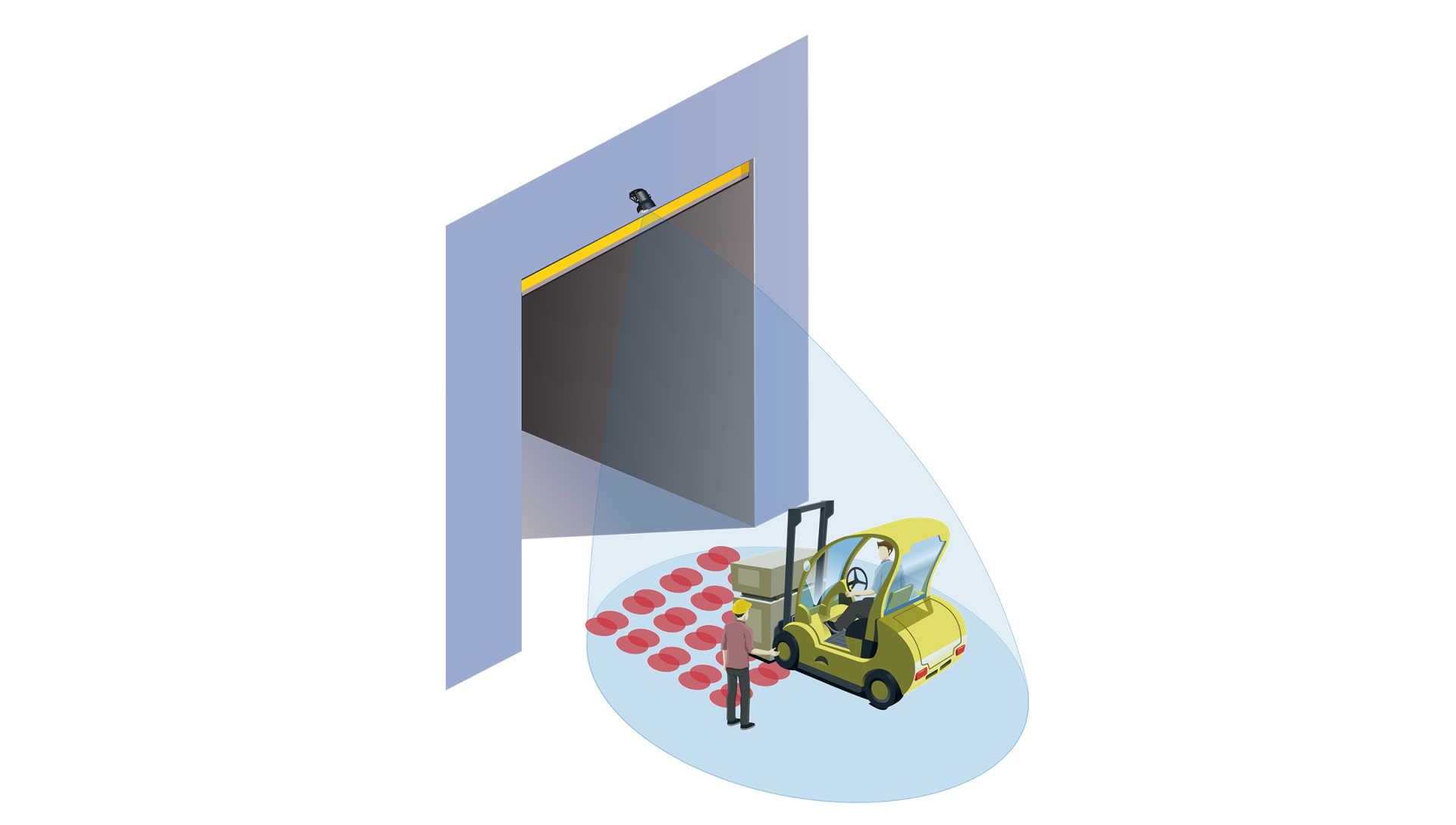

Right: Masked language models (e.g., BERT) use context from both the left and right, but predict only a small subset of words for each input. Left: Traditional language models (e.g., GPT) only use context to the left of the current word. Arrows indicate which tokens are used to produce a given output representation (rectangle). Instead of predicting every single input token, those models only predict a small subset - the 15% that was masked out, reducing the amount learned from each sentence.Įxisting pre-training methods and their disadvantages. However, the MLM objective (and related objectives such as XLNet’s) also have a disadvantage. MLMs have the advantage of being bidirectional instead of unidirectional in that they “see” the text to both the left and right of the token being predicted, instead of only to one side. These methods, though they differ in design, share the same idea of leveraging a large amount of unlabeled text to build a general model of language understanding before being fine-tuned on specific NLP tasks such as sentiment analysis and question answering.Įxisting pre-training methods generally fall under two categories: language models (LMs), such as GPT, which process the input text left-to-right, predicting the next word given the previous context, and masked language models (MLMs), such as BERT, RoBERTa, and ALBERT, which instead predict the identities of a small number of words that have been masked out of the input. Recent advances in language pre-training have led to substantial gains in the field of natural language processing, with state-of-the-art models such as BERT, RoBERTa, XLNet, ALBERT, and T5, among many others. Posted by Kevin Clark, Student Researcher and Thang Luong, Senior Research Scientist, Google Research, Brain Team
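To make the contrast between masked language modeling and replaced token detection concrete, here is a minimal Python sketch of how the two kinds of training examples could be built. It is an illustration only, not the released ELECTRA code: the helper names `mlm_example` and `rtd_example`, the five-word sentence, and the hand-picked position are all assumptions, and in real pre-training the positions and replacement tokens are chosen automatically rather than by hand.

```python
from typing import Dict, List, Tuple

MASK = "[MASK]"


def mlm_example(tokens: List[str], masked_positions: List[int]) -> Tuple[List[str], List[int]]:
    """BERT-style corruption (hypothetical helper).

    The chosen positions are hidden behind [MASK], and only those
    positions serve as prediction targets.
    """
    hidden = set(masked_positions)
    corrupted = [MASK if i in hidden else tok for i, tok in enumerate(tokens)]
    return corrupted, sorted(hidden)


def rtd_example(tokens: List[str], replacements: Dict[int, str]) -> Tuple[List[str], List[int]]:
    """Replaced-token-detection corruption (hypothetical helper).

    Some tokens are swapped for plausible fakes, and every position
    gets a binary label: 1 = replaced, 0 = kept the same.
    """
    corrupted = [replacements.get(i, tok) for i, tok in enumerate(tokens)]
    labels = [int(new != old) for new, old in zip(corrupted, tokens)]
    return corrupted, labels


# Illustrative sentence (an assumption), using the "cooked" -> "ate" swap from the text.
original = ["the", "chef", "cooked", "the", "meal"]

mlm_input, mlm_targets = mlm_example(original, masked_positions=[2])
rtd_input, rtd_labels = rtd_example(original, replacements={2: "ate"})

print("MLM input:  ", mlm_input, "-> predicts positions", mlm_targets)
print("RTD input:  ", rtd_input)
print("RTD labels: ", rtd_labels)
```

In this sketch the masked example yields a training signal at one position, while the replaced-token example yields a label at every position, which is the efficiency argument the post makes.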